Drum & Bass CycleGAN: examples

Link to the ML4MD @ ICML2019 programme.

What's this?

This web page illustrates the results of applying the CycleGAN technique to transform musical extracts between two genres of drum and bass music. Essentially, this generates a very rudimentary "remix" of a song from one genre into the other. For this, it only needs access to the raw audio -- no DAW or anything like that is involved in this process!

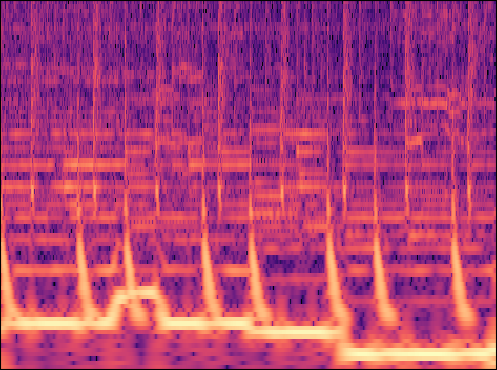

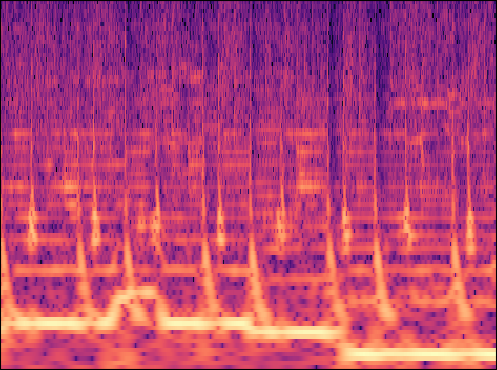

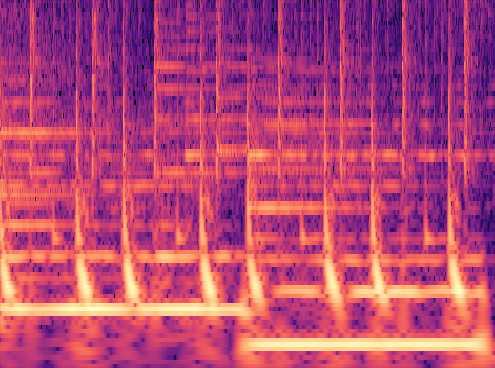

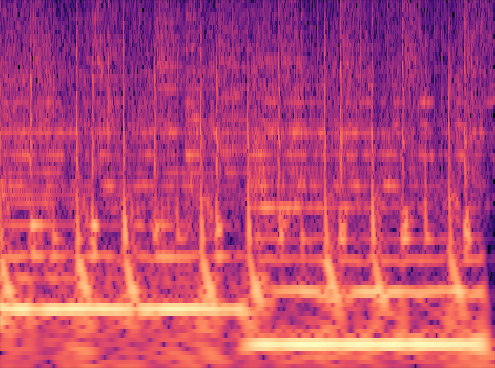

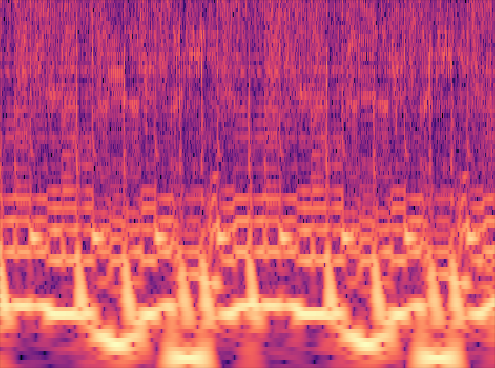

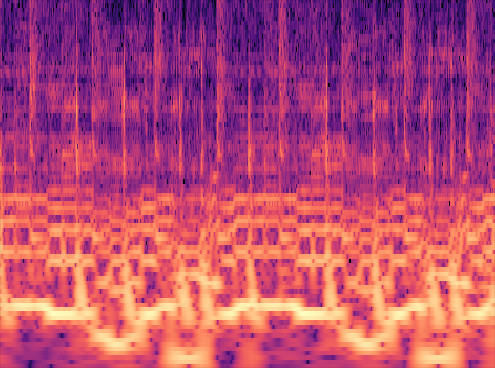

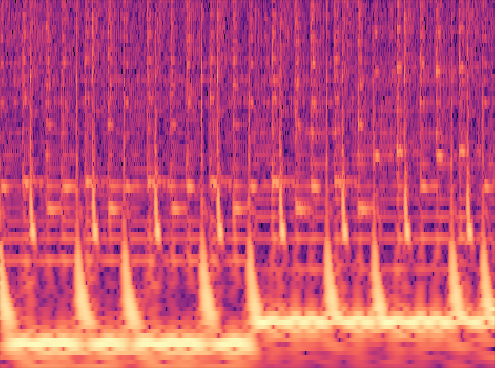

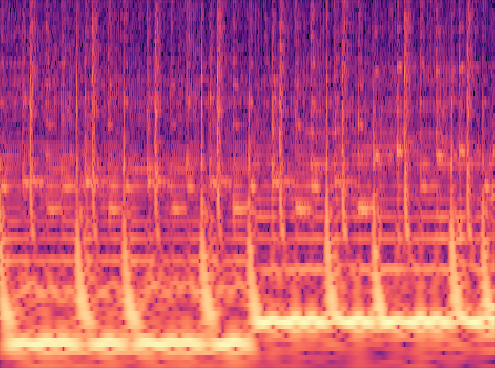

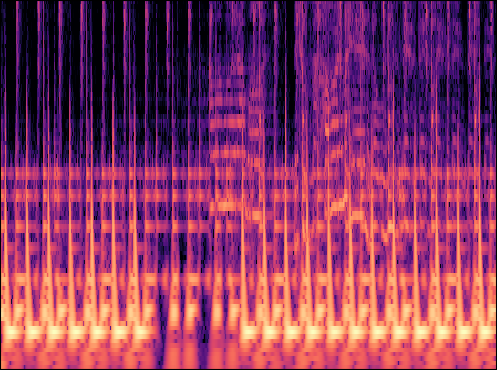

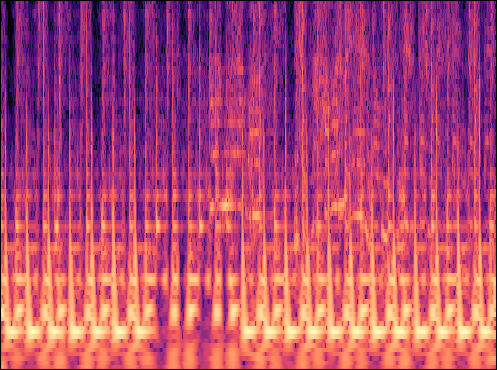

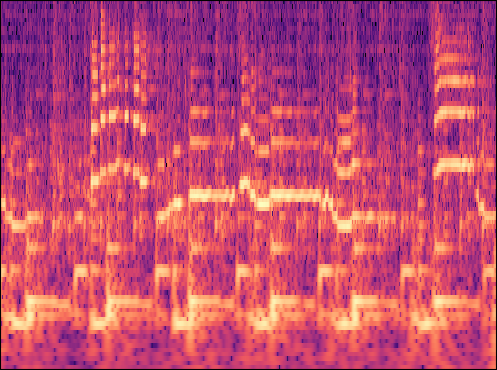

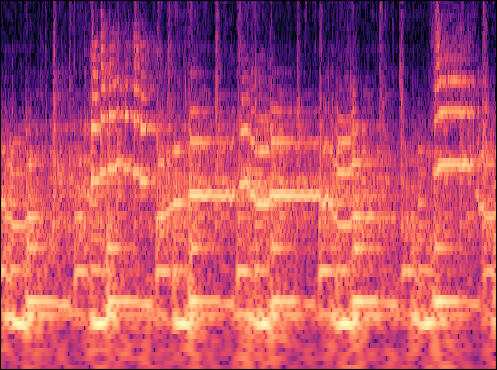

The column on the left shows short extracts of original drum and bass songs. Each audio extract is also represented visually using a so-called spectrogram, which illustrates the spectral energy as a function of the frequency (vertically; bass frequencies at the bottom, high frequencies at the top of the image) and time (horizontally, left to right).
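As a rough illustration of what such a spectrogram contains, the sketch below computes an amplitude spectrogram with NumPy. The function name `amplitude_spectrogram`, the frame length, the hop size and the test tone are illustrative assumptions, not the settings used for the examples on this page.

```python
import numpy as np

def amplitude_spectrogram(signal, frame_len=1024, hop=256):
    """Return |STFT| with shape (frame_len // 2 + 1, n_frames).

    Rows are frequency bins (low to high), columns are time frames.
    frame_len and hop are assumed values chosen for illustration.
    """
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice the signal into overlapping, windowed frames.
    frames = np.stack([signal[i * hop:i * hop + frame_len] * window
                       for i in range(n_frames)])
    # One real FFT per frame; keep only the magnitude.
    return np.abs(np.fft.rfft(frames, axis=1)).T

# Toy input: one second of a 1 kHz sine sampled at 8 kHz.
sr = 8000
t = np.arange(sr) / sr
tone = np.sin(2 * np.pi * 1000 * t)

spec = amplitude_spectrogram(tone)
peak_bin = int(spec.mean(axis=1).argmax())
peak_hz = peak_bin * sr / 1024  # convert the bin index back to Hz
```

For this toy tone the energy concentrates in a single horizontal band at the bin corresponding to 1 kHz, which is what a steady pitch looks like in the images above.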

The column on the right shows the result of transforming the music from the left column into the other genre: liquid drum and bass extracts are transformed into dancefloor and vice versa.

When transforming from liquid to dancefloor, the model learned to add more noise in the high frequency regions, creating an "open hi-hat" sound. It also makes the snare drums more pronounced, giving them a more "punchy" sound.

When transforming from dancefloor to liquid, the model learned to remove noise in the high frequencies, giving the hi-hats a "closed" sound, and to make the snare drum sound "shorter" and "brighter".

Note that these transformations were learned by the model automatically.

Example from the paper

Song: Technimatic - The Nightfall

More examples

Song: Hybrid Minds - Meant To Be

Examples in other genres

The examples below show the result of applying the trained model to other music genres. Although the model was trained on drum and bass music only, it still applies the same transformations to the snare drums and hi-hats in these genres.

Song: Reinier Zonneveld - Things We Might Have Said

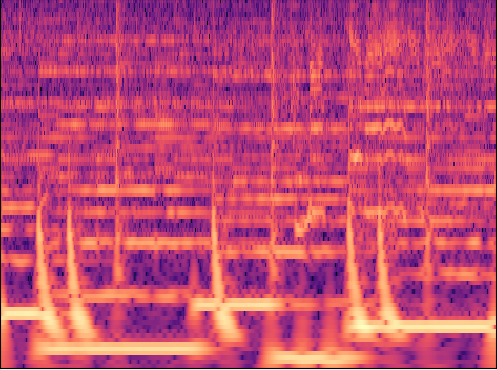

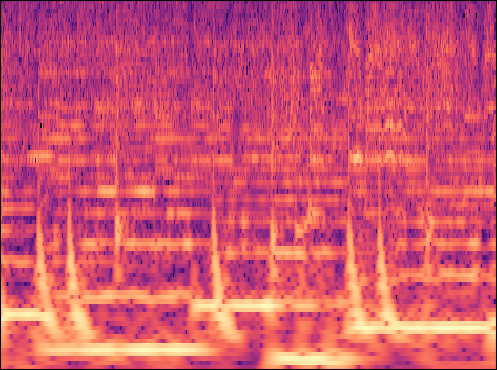

Phase reconstruction: original phase vs. Griffin-Lim

The model operates on amplitude spectrograms, so the spectral phase needs to be estimated in order to restore the audio. A common approach is to use a phase reconstruction algorithm such as the Griffin-Lim algorithm [1], but this was found to yield qualitatively unsatisfying results. Instead, the generated amplitude spectrogram is combined with the spectral phase of the original audio recording, which yields better-sounding results.
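The phase-reuse step can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions: the helper name `combine_with_original_phase` is hypothetical, and random toy arrays stand in for a real STFT and a real model output.

```python
import numpy as np

def combine_with_original_phase(generated_amplitude, original_stft):
    """Pair a generated amplitude spectrogram with the input recording's phase."""
    original_phase = np.angle(original_stft)
    return generated_amplitude * np.exp(1j * original_phase)

# Toy data (assumption): a random complex array plays the role of the
# original recording's STFT, and a scaled copy of its magnitude plays
# the role of the CycleGAN output.
rng = np.random.default_rng(0)
original_stft = rng.normal(size=(513, 20)) + 1j * rng.normal(size=(513, 20))
generated_amplitude = 2.0 * np.abs(original_stft)

hybrid = combine_with_original_phase(generated_amplitude, original_stft)
```

The resulting complex spectrogram has the generated magnitudes and the original phases, so an inverse STFT with the same analysis settings can turn it back into audio.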

Original:

Transferred to dancefloor:

Original:

Transferred to dancefloor:

These examples were generated by applying the Griffin-Lim phase reconstruction algorithm to the original (CycleGAN input) amplitude spectrogram and to the generated (CycleGAN output) amplitude spectrogram, respectively.
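For readers unfamiliar with Griffin-Lim, the following is a simplified NumPy sketch of the idea in [1]: start from a random phase, then alternate between resynthesising audio and re-analysing it, keeping the target amplitudes fixed each time. The frame length, hop size, iteration count and helper names are assumptions for illustration and do not reflect how the examples above were produced.

```python
import numpy as np

FRAME, HOP = 512, 128                    # assumed analysis settings
WINDOW = np.hanning(FRAME + 1)[:-1]      # periodic Hann window

def stft(x):
    """Complex STFT with shape (n_frames, FRAME // 2 + 1)."""
    n_frames = 1 + (len(x) - FRAME) // HOP
    frames = np.stack([x[i * HOP:i * HOP + FRAME] * WINDOW
                       for i in range(n_frames)])
    return np.fft.rfft(frames, axis=1)

def istft(spec):
    """Inverse STFT via windowed overlap-add with window-energy normalisation."""
    n_frames = spec.shape[0]
    frames = np.fft.irfft(spec, n=FRAME, axis=1) * WINDOW
    length = FRAME + (n_frames - 1) * HOP
    signal = np.zeros(length)
    norm = np.zeros(length)
    for i in range(n_frames):
        signal[i * HOP:i * HOP + FRAME] += frames[i]
        norm[i * HOP:i * HOP + FRAME] += WINDOW ** 2
    # Clip the normaliser so the near-zero window edges do not divide by zero.
    return signal / np.maximum(norm, 1e-8)

def griffin_lim(amplitude, n_iter=50, seed=0):
    """Estimate audio whose STFT magnitude approximates `amplitude`."""
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(amplitude.shape))  # random start
    for _ in range(n_iter):
        audio = istft(amplitude * phase)            # resynthesise
        phase = np.exp(1j * np.angle(stft(audio)))  # keep only the new phase
    return istft(amplitude * phase)

# Toy input (assumption): two sine tones, one second at 8 kHz.
sr = 8000
t = np.arange(sr) / sr
x = np.sin(2 * np.pi * 440 * t) + 0.5 * np.sin(2 * np.pi * 1200 * t)
target = np.abs(stft(x))
reconstructed = griffin_lim(target, n_iter=30)
```

Because the phase is only estimated, the reconstruction generally differs from the original waveform even when its amplitude spectrogram matches closely.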

[1] Griffin, D., & Lim, J. (1984). Signal estimation from modified short-time Fourier transform. IEEE Transactions on Acoustics, Speech, and Signal Processing, 32(2), 236-243.